It seems like every company these days has two things they turn to when first hitting an incident: a) is there an Alert Reference / Runbook / Whatever (the actual term depends on who you talk to), and b) is there a dashboard that can tell me exactly what’s wrong? In this post, I’m going to cover why these tools are not only unhelpful, but actively harmful to your incident response. Let’s get into it.

Background

For those of you who haven’t encountered these tools, I’ll give a brief intro to what they are. Alert References (I’m just going to call them that, you know what I mean) are a set of instructions for how one should respond to a given alert or incident: look at this, restart that, toggle the quantum resonator, whatever. They’re meant to take a lot of the guesswork out of what can be a stressful situation. That’s fine. Dashboards are similar. Generally coming in the Grafana flavor (although I love a good rrdgraph every now and then), they provide a broad overview of potentially relevant numbers as graphs over time, some relevant logs, and generally a summary of information that might be useful.

Engineering teams like these constructions because they feel good. Every time an incident is over, an engineer can walk away from it thinking “if only I had seen information X first”. They then go write an Alert Reference that tells another engineer exactly how to solve that incident, build a dashboard with all the relevant information for that incident, and feel good that if it happens again, they’ll immediately know what is wrong, solve it, and look like the all-knowing deity that they know themselves to be.

Let’s extend that

Let’s consider the logical extension of that behavior. Let’s say, conservatively, that you have an incident once every two weeks. If we assume that every incident is unique (and we’ll get back to that later), then after a year of repeating the incident -> Alert Reference + Dashboard flow, you’ll have 26 dashboards and 26 Alert References. So what happens when our next incident rolls around? Are we expected to check every Alert Reference and dashboard? You can see how that would be problematic: as the number of incidents grows, so does the number of dashboards and references we have to check, and with it the time it takes to check them all. All that time spent looking at dashboards for hints is time spent not actually looking at the problem. Now, of course, this is a pretty contrived example. You’re not going to be checking every dashboard every time, but you’ll be checking some subset of them! And therein lies the problem. Alert References and Dashboards take a non-zero amount of time to investigate during an incident, time you’re probably better off spending on the raw data, looking for correlations.

Why are they useful?

Let’s consider the opposite: a world where Alert References and Dashboards are useful. They allow you to immediately identify the problem and give you precise steps to solve it. A world that I’m sure some of you live in (or at least, you think you do). I’m sure some of you are scoffing at this post thinking “but Colin, we use dashboards every day!” and I’m sure you do. But at this point I would like to raise the counterpoint: why are your Alert References and dashboards useful?

If you have an Alert Reference that tells you exactly how to solve a problem, why haven’t you identified the actual root cause, or at the very least automated the runbook? Similarly, if you have a dashboard that immediately identifies an exact problem, why does that problem continue to exist?

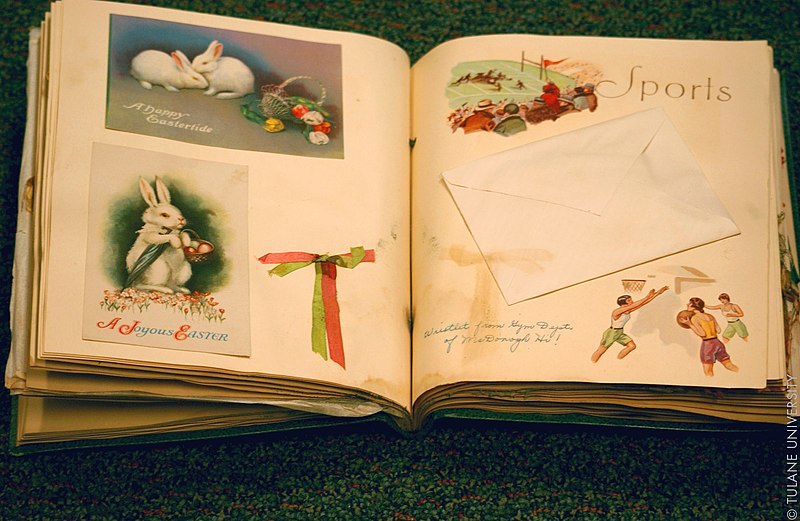

It’s for this reason that I call dashboards and Alert References “scrapbooking for engineers”: they serve the same purpose. Dashboards and Alert References serve to memorialise past incidents more than they provide actual operational value. In the same way that one might use a scrapbook to preserve a memory, one uses a dashboard to preserve the memory of an incident. These ones are particularly insidious, however, because they feel like they’re helping. If they didn’t provide any value (at least on the surface), then we as an industry wouldn’t be investing so much time and energy building and maintaining them, so the question naturally becomes: why do they feel useful? I think there are a few reasons.

Information Gathering

Firstly, I think that the value of these constructs is not in having them during an incident. As we’ve covered, having them during an incident can actually hinder your responsiveness by providing a mire of information to wade through before you actually start investigating. I think the value is in building them in the first place: using them to organise your thoughts, and to document your investigation into the cause of an incident.

As a Creative Outlet

Secondly, I think that creating Dashboards feels good to engineers. It lets us exercise some creative control via something with an immediate visual impact, which is rare, particularly in systems engineering, where one might work on backends all day. Getting to create something that not only looks nice but is also practical in some sense gives a rush of productivity. I mean, just look! We’ve accomplished a thing that can easily be shown to other people.

So what can we do?

We need to shrug off this idea that these tools are useful in the long term. We would be better served by these tools if they were treated as transient. Rather than a scrapbook, treat them as a notebook - used to quickly take notes before being forgotten once the problem is solved.

If they must be kept around, then they should only be around as long as it takes you to a) find the root cause of that alert and fix it, or b) automate it. That’s it. Once you’ve done that, they have served their purpose and you should get rid of them. Keeping them around any longer is tech debt, maintenance effort, confusion, and most importantly time that could be better spent elsewhere.

If you do have a backlog of Alert References and dashboards, it would be useful to start tracking their usage. If you’re not repeating incidents, then those old tools should be receiving no traffic at all, and if you can prove that, you would be well served to just delete them. Not only will this reduce the maintenance burden of keeping them around, but it will also reduce the mental overhead of checking a dozen things every incident.
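If your tooling can give you some record of who opened what and when, the usage check described above boils down to “which of these hasn’t been looked at recently?”. Here’s a minimal, hypothetical Python sketch of that check. It assumes you’ve already exported, for each dashboard or Alert Reference, a list of view timestamps (how you get those depends entirely on your tooling and isn’t shown here); the dashboard names and the 90-day window are purely illustrative.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def find_stale(views: dict[str, list[datetime]], now: datetime, max_age: timedelta) -> list[str]:
    """Return the names (sorted) of items with no views since now - max_age.

    `views` is an assumed export format: item name -> timestamps at which
    someone opened it. An empty list means it was never opened at all.
    """
    cutoff = now - max_age
    stale = []
    for name, timestamps in views.items():
        # Stale if no recorded view falls inside the window.
        if not any(ts >= cutoff for ts in timestamps):
            stale.append(name)
    return sorted(stale)


if __name__ == "__main__":
    # Hypothetical view history for three hypothetical dashboards.
    views = {
        "checkout-latency": [datetime(2024, 1, 3)],
        "db-failover": [],
        "queue-backlog": [datetime(2024, 6, 20)],
    }
    print(find_stale(views, now=datetime(2024, 7, 1), max_age=timedelta(days=90)))
    # prints ['checkout-latency', 'db-failover']
```

Treat whatever this returns as a list of deletion candidates rather than something to delete automatically; the point is to turn “I think nobody uses these” into something you can actually show.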

Wrapping this up

To wrap this up, I think that long-lived Dashboards and Alert References are a scourge. They’re easy to get sucked into, and before you know it, you’ve accrued a large amount of tech debt and a maintenance burden that doesn’t provide the operational benefits we’re led to believe it does. They serve to memorialise past incidents, as if those incidents were an indication of future ones, which, if we’re doing our jobs correctly, shouldn’t be the case. If it is the case, then you should revisit why you’re having so many recurring incidents.

If instead, as an industry, we transition to using Dashboards and Alert References purely for transient information gathering while proper solutions are worked on, then we will actually increase our operational efficiency, rather than relying on these tools as a crutch to avoid actually solving problems.

I'm on BlueSky: @colindou.ch. Come yell at me!